From phishing to bias: Study maps the hidden threats behind large language models

FAYETTEVILLE, GA, UNITED STATES, December 25, 2025 /EINPresswire.com/ -- Large language models (LLMs) have become central tools in writing, coding, and problem-solving, yet their rapidly expanding use raises new ethical and security concerns. The study systematically reviewed 73 papers and found that LLMs possess dual roles—empowering innovation while simultaneously enabling risks such as phishing, malicious code generation, privacy breaches, and misinformation spread. Defense strategies including adversarial training, input preprocessing, and watermark-based detection are developing but remain insufficient against evolving attack techniques. The research highlights that the future of LLMs will rely on coordinated security design, ethical oversight, and technical safeguards to ensure responsible development and deployment.

Large language models (LLMs) such as generative pre-trained transformer (GPT), bidirectional encoder representations from transformers (BERT), and T5 have transformed sectors ranging from education and healthcare to digital governance. Their ability to generate fluent, human-like text enables automation and accelerates information workflows. However, this same capability increases exposure to cyber-attacks, model manipulation, misinformation, and biased outputs that can mislead users or amplify social inequalities. Academic researchers warn that without systematic regulation and defense mechanisms, LLM misuse may threaten data security, public trust, and social stability. Based on these challenges, further research is required to improve model governance, strengthen defenses, and mitigate ethical risks.

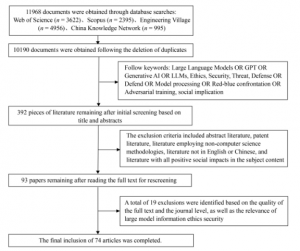

A research team from Shanghai Jiao Tong University and East China Normal University published (DOI: 10.1007/s42524-025-4082-6) a comprehensive review in Frontiers of Engineering Management, (2025) examining ethical security risks in large language models. The study screened over 10,000 documents and distilled 73 key works to summarize threats such as phishing attacks, malicious code generation, data leakage, hallucination, social bias, and jailbreaking. The review further evaluates defense tools including adversarial training, input preprocessing, watermarking, and model alignment strategies.

The review categorizes LLM-related security threats into two major domains: misuse-based risks and malicious attacks targeting models. Misuse includes phishing emails crafted with near-native fluency, automated malware scripting, identity spoofing, and large-scale false information production. Malicious attacks appear at both data/model level—such as model inversion, poisoning, extraction—and user interaction level including prompt injection and jailbreak techniques. These attacks may access private training data, bypass safety filters, or induce harmful content output.

On defense strategy, the study summarizes three mainstream technical routes: parameter processing, which removes redundant parameters to reduce attack exposure; input preprocessing, which paraphrases prompts or detects adversarial triggers without retraining; and adversarial training, including red-teaming frameworks that simulate attacks for robustness improvement. The review also introduces detection technologies like semantic watermarking and CheckGPT, which can identify model-generated text with up to 98–99% accuracy. Despite progress, defenses often lag behind evolving attacks, indicating urgent need for scalable, low-cost, multilingual-adaptive solutions.

The authors emphasize that technical safeguards must coexist with ethical governance. They argue that hallucination, bias, privacy leakage, and misinformation are social-level risks, not merely engineering problems. To ensure trust in LLM-based systems, future models should integrate transparency, verifiable content traceability, and cross-disciplinary oversight. Ethical review frameworks, dataset audit mechanisms, and public awareness education will become essential in preventing misuse and protecting vulnerable groups.

The study suggests that secure and ethical development of LLMs will shape how societies adopt AI. Robust defense systems may protect financial systems from phishing, reduce medical misinformation, and maintain scientific integrity. Meanwhile, watermark-based traceability and red-teaming may become industry standards for model deployment. The researchers encourage future work toward AI responsible governance, unified regulation frameworks, safer training datasets, and model transparency reporting. If well-managed, LLMs can evolve into reliable tools supporting education, digital healthcare, and innovation ecosystems while minimizing risks linked to cybercrime and social misinformation.

References

DOI

10.1007/s42524-025-4082-6

Original Source URL

https://doi.org/10.1007/s42524-025-4082-6

Funding Information

This study was supported by the Beijing Key Laboratory of Behavior and Mental Health, Peking University, China.

Lucy Wang

BioDesign Research

email us here

Legal Disclaimer:

EIN Presswire provides this news content "as is" without warranty of any kind. We do not accept any responsibility or liability for the accuracy, content, images, videos, licenses, completeness, legality, or reliability of the information contained in this article. If you have any complaints or copyright issues related to this article, kindly contact the author above.